Kainmueller Lab

Biomedical Image Analysis

Profile

We focus on capturing biological prior knowledge in machine learning models for accurate cell segmentation, annotation, and tracking, and we develop computationally efficient solvers for the underlying optimization problems. For example, epithelial cells have approximately polygonal shapes and they form honeycomb-like grids. Our methods exploit such knowledge for improved segmentation accuracy.

Team

Research

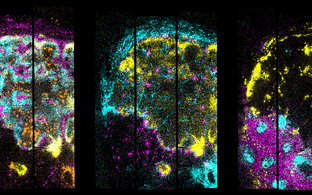

In some model organisms, such as the nematode worm C. elegans, individuals are exact copies of each other in terms of the number and function of their cell nuclei. This remarkable property allows for cell-level observations of gene expression made in different individuals to be merged into a common reference atlas. Establishing an exhaustive atlas of gene expression at the cellular level will pave the way towards a fundamental understanding of how the genome of an organism encodes its development and function. However, to merge observations from different individuals into a reference atlas, cells have to be annotated, i.e. labelled with their unique biological names, in microscopy images.

This is a hard and time-consuming task even for trained anatomists. In the C. elegans project, the lab works on reducing the amount of required expert annotations to a viable minimum by not just automating the annotation task, but furthermore by training the underlying machine learning model in an unsupervised manner, i.e. without the need for manually annotated examples to learn from. The lab aims at phrasing such unsupervised training as a combinatorial optimization problem and developing a respective solver.

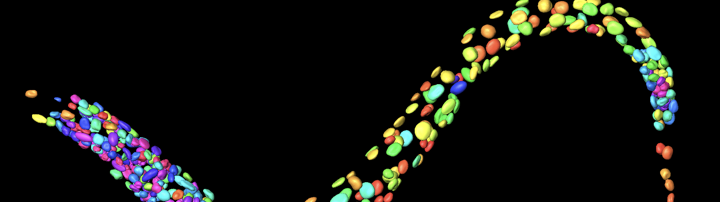

Similar to C. elegans, the brain of the fruit fly Drosophila is stereotyped at the level of individual neurons. The lab’s work on Drosophila aims at automated matching of in-vivo observations of neuron function in light microscopy to detailed observations of neuron connectivity in electron microscopy, and vice-versa.